After it became known that Apple, too, lets staff listen to Siri voice commands, the company is changing how it improves the voice assistant. There is, however, an important subtlety to keep in mind.

It had previously become public that at Apple, as at Google and Amazon, real people listened to audio recordings of voice commands in order to improve the quality of the voice assistant. From a technical standpoint there is not much to object to; after all, man-made systems can only be improved by humans. The criticism was aimed primarily at how Apple communicated this practice and how it handled personal data.

Since Apple advertises heavily with the claim that it attaches great importance to privacy, it was all the more baffling to learn that the 300 people who listened to the recordings were not directly employed by Apple. They were also the first to bear the consequences: Apple dismissed all 300 with one week's notice. A classic pawn sacrifice, so to speak. Now Apple, the self-declared privacy company, promises that actual Apple employees will handle the sensitive data.

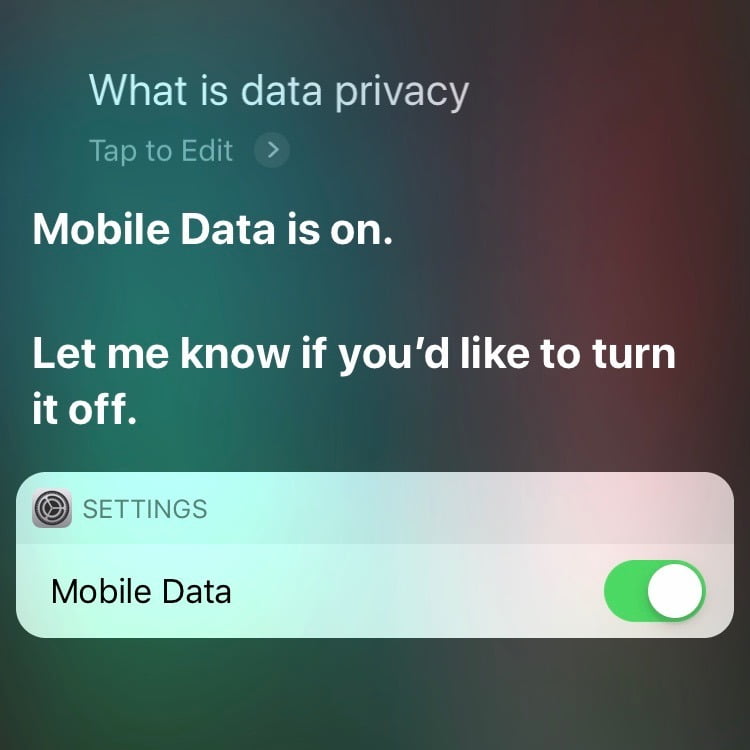

In a press release, Apple apologizes to all its users, and in a support document it promises improvement. The evaluation of one's own audio commands is now opt-in. So if you want to help with the technical improvement, you can give Apple permission to use your recordings for that purpose.

Second, users will be able to opt in to help Siri improve by learning from the audio samples of their requests.

This keeps spoken commands addressed to Siri from ending up in a database as audio snippets. What you cannot prevent (unless you stop using Siri altogether) is that computer-generated transcripts of those voice commands are still created.

We will continue to use computer-generated transcripts to help Siri improve.

So even if you do not opt in to having your voice commands evaluated by humans as audio recordings, they are still evaluated, just after an algorithm has first converted the spoken word into text. Only the medium changes. Yet what most people care about is that their personal statements do not end up in any database unless they have been asked beforehand and have agreed. (Use with consent is necessary for technical progress and not at all objectionable; we support it personally as well.)

Everything you say goes to Apple as text.

For personal information, it is of course completely irrelevant whether it is recorded as audio or transcribed and then sent into the analysis machinery. Keep this in mind when using Siri: although the original audio recordings now require your approval, what you say will always find its way into the system as text, even without approval. The distinction from Google's or Amazon's voice assistants, and the privacy advantage over them, seem less clear-cut than Apple makes them out to be.